ChatGPT is a hot topic. Tom Scott compared it to a “Napster moment”, when suddenly everyone started sharing music and videos; Jonathan Stark called it the iPhone effect, when everyone started using a mobile device everywhere; and Bart Lacroix compared it to the first time using Google or Spotify: “I opened [ChatGPT] in my browser as a tab and never closed it since.”

Some practical examples of using ChatGPT as a tool to run an organisation:

As a tireless writing assistant

A common thread is that people use ChatGPT as a writing assistant, even for writing software.

In their podcast, Jonathan Stark and Rochelle Moulton say it “can be like having a sort of infinite number of (free) interns.” Its output can be a good first version, but you definitely need to check it for factual accuracy.

It can get you over the “blank page” hurdle: editing a draft is easier than coming up with the draft in the first place. ChatGPT’s output is bland, it lacks a “voice”, and you probably want to add other points of view as well. But: you have a start.

As a CEO co-pilot

Bart Lacroix takes it even further and uses ChatGPT across the whole organisation: "the whole internet up to 2021" is in there, so feed it a few market research reports published after that, and let it help you map trends, analyse competitors, and create a new value proposition. Then, let it write new copy for your website, and fill your marketing content calendar with ideas and drafts. Have it look at legal documents. Create sales scripts. Help the developers.

“Working as a CEO at a SaaS scale-up used to consist of 1% inspiration and 99% perspiration. Now it’s better balanced for me, allowing me to use more of my ideas and creativity while letting #ChatGPT do the hard work.”

Read Bart’s interactions with ChatGPT on LinkedIn.

The secret sauce of the tool

ChatGPT is based on a Large Language Model, GPT. That model can do all kinds of language generation. ChatGPT uses the model to generate text in a form that looks like a conversation, responding to your input. There are more ways to integrate GPT in other applications.
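To make the "looks like a conversation" idea concrete, here is a minimal sketch of how an application could wrap a text-completing model in a chat loop. This is an illustration under assumptions, not how ChatGPT is actually built: the `User:`/`Assistant:` labels, the function names, and the `fake_complete` stand-in for a real model are all hypothetical.

```python
from typing import Callable, List, Tuple

Turn = Tuple[str, str]  # (speaker, text)


def build_prompt(history: List[Turn], user_input: str) -> str:
    """Flatten the conversation so far into one text the model can continue."""
    lines = [f"{speaker}: {text}" for speaker, text in history]
    lines.append(f"User: {user_input}")
    lines.append("Assistant:")  # the model is asked to continue from here
    return "\n".join(lines)


def chat_turn(history: List[Turn], user_input: str,
              complete: Callable[[str], str]) -> List[Turn]:
    """Run one exchange and return the extended conversation history."""
    reply = complete(build_prompt(history, user_input)).strip()
    return history + [("User", user_input), ("Assistant", reply)]


def fake_complete(prompt: str) -> str:
    """Hypothetical stand-in for a real model: reports the prompt size."""
    return f" I received a prompt of {len(prompt.splitlines())} lines."


history = chat_turn([], "What is a Large Language Model?", fake_complete)
for speaker, text in history:
    print(f"{speaker}: {text}")
```

The same model could be embedded differently, for example with a fixed instruction in front of every prompt, which is where an application's own behaviour comes from.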

Training a Large Language Model is basically a race for huge computing resources between companies like OpenAI, Microsoft, and Google. But the real magic happens in how the model is embedded in an application. Jonathan Stark, in the podcast above, thinks the secret sauce is the guard rails for such an application: what sort of text is the model allowed to generate?

The results and potential legal issues of a model also depend on what information is used for a model’s training.

- When working with "everything on the web", the model will process data that falls under the European GDPR. To process such data, you have to have a legal basis and a specific purpose, and it is unlikely that a generalist language model can comply with that. (Arnoud Engelfriet wrote about this, in Dutch.)

- John Nay of Stanford Law School describes ways to use such a model as a lobbying assistant, focused on analysing US legal documents and providing draft lobbying texts if a bill affects your company.

- When interacting with ChatGPT, anything you enter will be stored and possibly used for future training purposes. Therefore, be careful with entering any confidential or personal information.
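One practical habit that follows from the last point is to mask obvious personal details before pasting text into the tool. The sketch below is only an illustration: the two patterns and the `redact` helper are assumptions made for this example, they catch only simple e-mail addresses and phone-like numbers, and they are no substitute for reading your text before you send it.

```python
import re

# Deliberately simple patterns (assumptions for this sketch, not a complete filter).
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
PHONE = re.compile(r"\+?\d[\d\s-]{7,}\d")


def redact(text: str) -> str:
    """Replace e-mail addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    text = PHONE.sub("[PHONE]", text)
    return text


draft = "Please reply to jane.doe@example.com or call +31 20 123 4567."
safe = redact(draft)
print(safe)  # Please reply to [EMAIL] or call [PHONE].
```

Names, customer details, and confidential figures will pass straight through a filter like this, so judgement is still needed.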

The actual secret sauce for now is the user of the tool: with well-crafted prompts and sufficient judgement, it is a useful tool.

How Large Language Models actually work

I wanted to have a better understanding of how a language model is trained, and why it seems to show understanding of topics, while making up "facts" with the same ease.

Steve Seitz made a couple of short videos that explain what a large language model actually is, beyond "it’s a statistical way to generate sentences". (He also has two short videos on image generators.)
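For a feel of what "a statistical way to generate sentences" means at its very simplest, here is a toy sketch. It only counts which word follows which in a tiny text and then picks followers at random. Real large language models are neural networks that look at far more context, so treat this as an analogy under that assumption; the corpus and function names are made up for the example.

```python
import random
from collections import defaultdict


def train_bigrams(text):
    """Record, for every word, which words followed it in the training text."""
    followers = defaultdict(list)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        followers[current].append(nxt)
    return followers


def generate(followers, start, length, seed=0):
    """Build a sentence by repeatedly picking a random observed follower."""
    rng = random.Random(seed)
    word = start
    output = [word]
    for _ in range(length - 1):
        options = followers.get(word)
        if not options:  # no known follower: stop early
            break
        word = rng.choice(options)
        output.append(word)
    return " ".join(output)


corpus = "the cat sat on the mat . the dog sat on the sofa . the cat saw the dog ."
model = train_bigrams(corpus)
print(generate(model, "the", 8))
```

Every step is a word pair that really occurred in the corpus, yet the sentence as a whole may never have been written, such as a dog sitting on the mat. That is a small-scale version of text that reads fluently while stating something that was never in the source.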

Here is half an hour to get you up to speed with how your new text and image assistants work:

What comes next?

I see two technical developments that will further change the landscape:

- The models are becoming more complex, allowing for even more nuanced and varied language processing. At the same time, the selection of training material will become better, and adding more up-to-date information in an incremental way will further speed up development of services like ChatGPT.

- Apart from Large Language Models, there is also a lot of development in the area of Knowledge Graphs (remember the vision of a semantic web?). This is based on logic, facts, first principles, and rules of sound reasoning. Once these two paradigms are connected and synergy emerges, the tools built on that will be able to explain their arguments, and provide you with facts and references that really exist.
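The Knowledge Graph side of that picture can be sketched in a few lines: facts are stored as subject, relation, object triples with a source attached, and a rule combines them into a conclusion that can show its work. The two facts below come from this article itself; the `Fact` structure, the relation names, and the single rule are assumptions made for the illustration, not a real knowledge graph standard.

```python
from typing import List, NamedTuple, Tuple


class Fact(NamedTuple):
    subject: str
    relation: str
    obj: str
    source: str  # where the fact comes from, so answers can cite it


FACTS = [
    Fact("ChatGPT", "is_based_on", "GPT", "this article"),
    Fact("GPT", "is_a", "Large Language Model", "this article"),
]


def lookup(subject: str, relation: str, facts: List[Fact] = FACTS) -> List[Fact]:
    """Return only facts that are actually stored; nothing is made up."""
    return [f for f in facts if f.subject == subject and f.relation == relation]


def explain_foundation(subject: str, facts: List[Fact] = FACTS) -> List[Tuple[Fact, Fact]]:
    """Rule: if X is_based_on Y and Y is_a Z, then X is built on a Z.

    Returns the pairs of facts that support each conclusion.
    """
    return [(based, kind)
            for based in lookup(subject, "is_based_on", facts)
            for kind in lookup(based.obj, "is_a", facts)]


for based, kind in explain_foundation("ChatGPT"):
    print(f"{based.subject} is built on a {kind.obj}")
    print(f"  because: {based.subject} {based.relation} {based.obj} ({based.source})")
    print(f"  because: {kind.subject} {kind.relation} {kind.obj} ({kind.source})")
```

Asking about something that is not in the graph returns an empty list instead of a fluent guess, which is the property the combination with language models would need to preserve.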

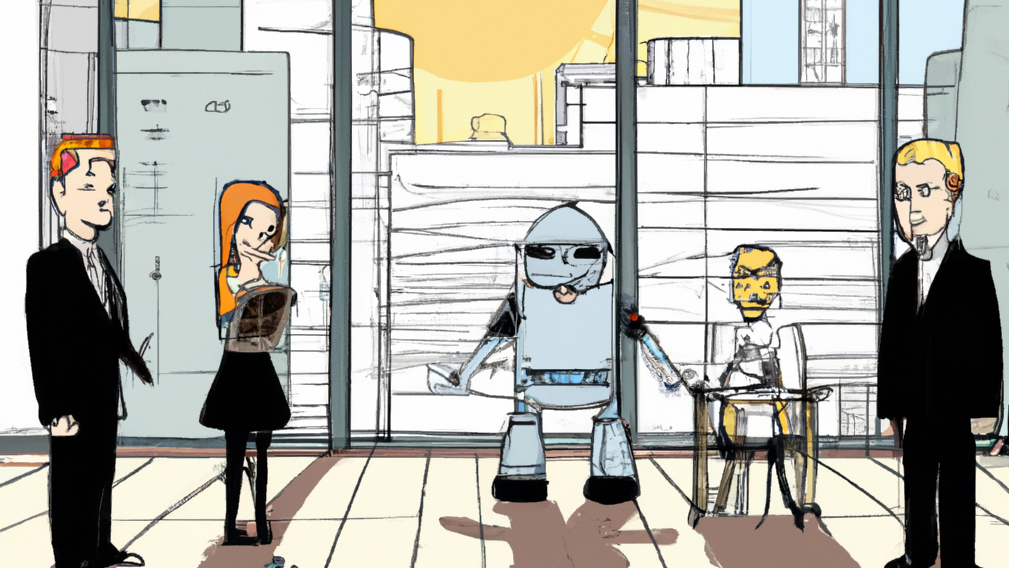

Image at the top: a cropped version of a DALL-E creation for “A graphic novel drawing that portrays a business owner who introduces a robot assistant as a new member of the team in an open office space to 5 office workers, in front of a window that shows as a backdrop a city skyline and a bright sun.”